Microsoft Dynamics AX 2012 – File Exchange (Export) using Data Import Export Framework (DIXF)

Purpose: The purpose of this document is to illustrate how to implement integration with Microsoft Dynamics AX 2012 based on File Exchange.

Challenge: In certain scenarios you need to integrate with Microsoft Dynamics AX 2012 by means of File Exchange, because this is dictated by the software you integrate with or by other architectural considerations. For File Exchange integration with Microsoft Dynamics AX 2012 you can use the capabilities provided by the Data Import Export Framework (DIXF).

Solution: The Data Import Export Framework (DIXF) for Microsoft Dynamics AX 2012 was designed to support a broad array of data migration and integration scenarios. DIXF comes with numerous standard templates for different business entities, and it can export data from Microsoft Dynamics AX 2012 into a file, which is what I need in my scenario. In this article I'll implement integration with Microsoft Dynamics AX 2012 based on File Exchange using DIXF. My goal is to organize a continuous data export (CSV data feed) from Microsoft Dynamics AX 2012, with a file periodically generated for consumption by an external system.

Walkthrough

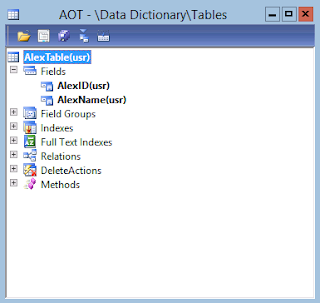

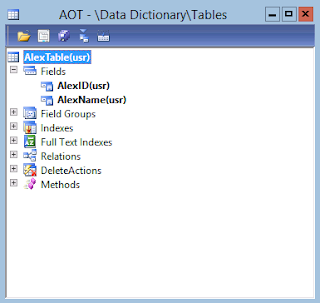

In my scenario I'll be exporting custom data based on a brand-new data model I've introduced

Let's start with defining Source data formats as shown below

Source data formats

Please note that I defined two Source data formats, "AX" and "File", which will be used in my scenario. The "File" Source data format is set up for CSV (comma-separated values) files.

In this particular scenario I'm going to export custom data, which is why I implemented a simple DIXF template that supports my custom table

Project

Please see the implementation of DIXF template below

Entity Table

X++

    public boolean validateField(FieldId _fieldIdToCheck)
    {
        return true;
    }

    public boolean validateWrite()
    {
        return true;
    }

Entity Class

X++

    [DMFAttribute(true)]
    class AlexEntityClass extends DMFEntityBase
    {
        AlexEntity entity;
        AlexTable  target;
    }

    public container jumpRefMethod(Common _buffer, Object _caller)
    {
        MenuItemName menuItemName;

        menuItemName = menuitemDisplayStr(AlexForm);
        entity = _buffer;

        return [menuItemName, _buffer];
    }

    public void new(AlexEntity _entity)
    {
        entity = _entity;
    }

    public void setTargetBuffer(Common _common, Name _dataSourceName = '')
    {
        switch (_common.TableId)
        {
            case tableNum(AlexTable) :
                target = _common;
                break;
        }
    }

    public static AlexEntityClass construct(AlexEntity _entity)
    {
        AlexEntityClass entityClass = new AlexEntityClass(_entity);

        return entityClass;
    }

    public static container getReturnFields(Name _entity, MethodName _name)
    {
        DataSourceName dataSourceName = queryDataSourceStr(AlexTargetEntity, AlexTable);
        container      con = [dataSourceName];

        Name fieldstrToTargetXML(FieldName _fieldName)
        {
            return DMFTargetXML::findEntityTargetField(_entity, dataSourceName, _fieldName).xmlField;
        }

        switch (_name)
        {
            default :
                con = conNull();
        }

        return con;
    }

Target Entity Query

Now that my template is implemented, I can create a Target entity based on the Entity Staging Table, Entity Class and Target Entity Query

Target entities

The next step is to create a Processing group and assign the appropriate entities to it. Please note that in my scenario I'll have to create two Processing groups: one with Source data format = "AX" and another with Source data format = "File". The first Processing group will be used to populate the Staging table with AX data, and the second will be used to generate a file based on the Staging data.

Processing group - AX

Processing group – AX (Entities)

Processing group – AX (Entities - Zoomed in)

Processing group - File

Processing group – File (Entities)

Processing group – File (Entities – Zoomed in)

Please note that both Processing groups have the same Entity assigned

For the File Processing group I'll now generate a sample file, which defines what my resulting CSV file will look like

Generate Sample file Wizard - Welcome

Generate Sample file Wizard – Display data

Here's what the generated sample file looks like

Sample file

Based on sample file I can now automatically generate Source – Staging mapping

Generate Source Mapping

Modify Source Mapping

To make this scenario more meaningful I'll also introduce some demo data

Demo Data

Now that I have some data, we can test the AX Processing group to populate the Staging table

Get Staging Data (from AX into Staging table)

Staging data execution


As a result of the execution, 3 records are inserted into the Staging table

Infolog

We can now review Execution history

Execution history

And take a look at the Staging data

Staging data

At this point, with Staging data in place, we can try to export this data to a file

Export to file

Here we'll select Processing group "File" in order to generate the file

Export to file


As a result, the data is exported to the file

Infolog

Please note that I populated Staging data by using Processing group "AX", but then used Processing group "File" to generate the file

The resulting file looks like this

File

Great! We got this working!
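To sanity-check the exported feed from inside AX before handing it to an external system, a small X++ job can read the file back. This is only a sketch: the job name and the file path are hypothetical, and it assumes the export is a plain comma-separated text file that CommaTextIo can read with its default code page.

```xpp
static void AlexReadExportedFile(Args _args)
{
    #File
    // Hypothetical path: use the file name chosen in "Export to file"
    Filename    fileName = @"C:\Temp\AlexExport.csv";
    CommaTextIo io;
    container   record;

    io = new CommaTextIo(fileName, #io_read);

    if (!io || io.status() != IO_Status::Ok)
    {
        throw error(strFmt("Cannot open file %1", fileName));
    }

    // Read record by record until the end of the file is reached
    record = io.read();
    while (io.status() == IO_Status::Ok)
    {
        // Show the fields of each line, joined with commas
        info(con2Str(record));
        record = io.read();
    }
}
```

Jobs run on the client by default, so the path is resolved on the client machine; a server-side variant would additionally need a FileIOPermission assertion.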

Alternatively, the Export to file function is available at the Processing group level as shown below

Processing group

Export to file


This time I can also explicitly set up Processing group "File" to generate the file

Export to file


The resulting file looks like this

File

Please note that when you export to a file, the Processing group you select must have a Sample file defined, so that the system knows the file format for the export. This is dictated by the lookup code shown below

DMFStagingToSourceFileWriter.dialogDefinationGroupIdLookup method

    public void dialogDefinationGroupIdLookup(FormStringControl _executionControl)
    {
        SysTableLookup       tableLookup;
        QueryBuildDataSource qbd, qbdEntity;
        Query                query = new Query();

        tableLookup = SysTableLookup::newParameters(tableNum(DMFDefinitionGroupEntity), _executionControl);
        tableLookup.addLookupfield(fieldNum(DMFDefinitionGroupEntity, DefinitionGroup), true);
        tableLookup.addLookupfield(fieldNum(DMFDefinitionGroupEntity, Source));

        qbd = query.addDataSource(tableNum(DMFDefinitionGroupEntity));
        // Only Processing groups with a Sample file defined are offered in the lookup
        qbd.addRange(fieldNum(DMFDefinitionGroupEntity, SampleFilePath)).value(SysQuery::valueNot(''));

        qbdEntity = qbd.addDataSource(tableNum(DMFEntity));
        qbdEntity.addLink(fieldNum(DMFDefinitionGroupEntity, Entity), fieldNum(DMFEntity, EntityName));
        qbdEntity.addRange(fieldNum(DMFEntity, Type)).value(queryValue(this.parmDMFEntityType()));
        qbdEntity.addRange(fieldNum(DMFEntity, EntityTable)).value(DMFEntity::find(entityName).EntityTable);

        tableLookup.parmQuery(query);
        tableLookup.performFormLookup();
    }
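If the Processing group you expect is missing from the lookup, it can help to list which group/entity combinations currently have a Sample file. The job below is a minimal sketch (the job name is hypothetical). It uses only the DMFDefinitionGroupEntity fields referenced by the lookup code above, and it deliberately leaves out the entity type and entity table ranges that the real lookup also applies.

```xpp
static void AlexListGroupsWithSampleFile(Args _args)
{
    DMFDefinitionGroupEntity groupEntity;

    // Same idea as the SampleFilePath range in the lookup: skip empty paths
    while select DefinitionGroup, Entity, Source, SampleFilePath
        from groupEntity
        where groupEntity.SampleFilePath != ''
    {
        info(strFmt("%1 | %2 | %3 | %4",
            groupEntity.DefinitionGroup,
            groupEntity.Entity,
            groupEntity.Source,
            groupEntity.SampleFilePath));
    }
}
```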

Now let's consider a scenario where I want to reposition fields in the file

For this purpose I can use the Up and Down buttons when I generate a sample file

Generate Sample File Wizard

The sample file then looks like this, with the new sequence of fields

Sample file

Then we'll regenerate the mapping from scratch to reflect the change. This is also very important when you add new data elements to a file

Message

As a result, our exported file with the changed sequence of fields looks like this

File

The last thing we want to do is to establish a Batch job for continuous integration (data export) on a schedule

For this purpose we can set up execution jobs as batch jobs. We'll start with Staging job

Staging – Batch job

Staging status at this point is Waiting

Execution history

Then we'll also try to set up the Export data to flat file job as a Batch job right away

Export – Batch job

Staging data shows nothing at this point because we want to execute the Staging job in Batch instead of interactively

Entity data

For Export job we'll specify Processing group "File"

Export data to flat file

And set it up for Batch execution

Export data to flat file

After the execution attempt you may run into the following Get Staging Data error. The original error looks like this

Error

    System.NullReferenceException: Object reference not set to an instance of an object.
       at Microsoft.Dynamics.Ax.Xpp.ReflectionCallHelper.MakeNewObjIntPtr(String typeName, IntPtr intPtr)
       at Microsoft.Dynamics.Ax.Xpp.XppObjectBase.callReturn(KernelCallReturnVal returnVal)
       at Microsoft.Dynamics.Ax.Xpp.DictTable.Makerecord()
       at Dynamics.Ax.Application.DMFEntityWriter.writeToStaging(String _definitionGroup, String _executionId, DMFEntity entity, Int64 _startRefRecId, Int64 _endRefRecId, Boolean _applyChangeTracking, AifChangeTrackingTable _ctCursor, Boolean , Boolean , Boolean , Boolean ) in DMFEntityWriter.writeToStaging.xpp:line 54
       at Dynamics.Ax.Application.DMFEntityWriter.writeToStaging(String _definitionGroup, String _executionId, DMFEntity entity, Int64 _startRefRecId, Int64 _endRefRecId, Boolean _applyChangeTracking, AifChangeTrackingTable _ctCursor)
       at Dynamics.Ax.Application.DMFStagingWriter.execute(String _executionId, Int64 _batchId, Boolean _runOnService, Boolean _calledFrom, Boolean _applyChangeTracking, AifChangeTrackingTable _ctCursor, Boolean , Boolean , Boolean , Boolean ) in DMFStagingWriter.execute.xpp:line 302
       at Dynamics.Ax.Application.DMFStagingWriter.execute(String _executionId, Int64 _batchId, Boolean _runOnService, Boolean _calledFrom, Boolean _applyChangeTracking, AifChangeTrackingTable _ctCursor)
       at Dynamics.Ax.Application.DMFStagingWriter.runOnServer(String _executionId, Int64 _batchId, Boolean runOn, Boolean _applyChangeTracking, AifChangeTrackingTable _ctCursor, Boolean , Boolean ) in DMFStagingWriter.runOnServer.xpp:line 22
       at Dynamics.Ax.Application.DMFStagingWriter.runOnServer(String _executionId, Int64 _batchId, Boolean runOn, Boolean _applyChangeTracking, AifChangeTrackingTable _ctCursor)
       at Dynamics.Ax.Application.DMFStagingWriter.Run() in DMFStagingWriter.run.xpp:line 22
       at Dynamics.Ax.Application.BatchRun.runJobStaticCode(Int64 batchId) in BatchRun.runJobStaticCode.xpp:line 54
       at Dynamics.Ax.Application.BatchRun.runJobStatic(Int64 batchId) in BatchRun.runJobStatic.xpp:line 13
       at BatchRun::runJobStatic(Object[] )
       at Microsoft.Dynamics.Ax.Xpp.ReflectionCallHelper.MakeStaticCall(Type type, String MethodName, Object[] parameters)
       at BatchIL.taskThreadEntry(Object threadArg)

Then we'll also see a derived Export to File Error

Error

Should this happen, please check that you have a relation between the DMFExecution table and your table defined as shown below

Relation

Okay, now we should be back in business and we can review our separate Batch jobs: Staging job and Export job

Batch jobs – Staging and Export

Batch tasks - Staging

Batch tasks – Staging (Parameters)

Batch tasks - Export

Batch tasks – Export (Parameters)

Batch tasks – Staging (Log)

Batch tasks – Export (Log)

Great! Here's the file generated after the first Batch execution

File

The next logical thought would be to link those Batch jobs as related Batch tasks of one single Batch job

But when you create a Batch job manually and try to assign Batch tasks for the Staging job and the Export job, you will not find the appropriate classes in the list of available Batchable classes

Classes

Infolog

The reason for this behavior is that these classes must have the canGoBatchJournal method overridden to return "true". This method validates that the class can be used as a batch task

canGoBatchJournal method

    public boolean canGoBatchJournal()
    {
        return true;
    }

After I added canGoBatchJournal method returning "true" to DMFStagingWriter (Staging job) class and DMFStagingToSourceFileWriter (Export job) class, they showed up in the list of available batch tasks

Classes

Now I can set up a new Batch job with multiple related Batch tasks to handle end-to-end integration (data retrieval and data export)

Batch job

I can also define a single Recurrence pattern for data export

Recurrence

And most importantly I can define related Batch tasks

Batch tasks – Staging job

Staging job - Parameters

Batch tasks – Export job

Export job – Parameters

After the first execution we'll get the result

File

However, after the second and subsequent executions we'll run into the issue shown below

Error

The reason for the "File already exists" error is that the DMFStagingWriter.decompressWriteFile method throws an exception if a file with this name already exists. Please note that the WinAPIServer class is used for Server-side execution (Batch jobs)

DMFStagingWriter.decompressWriteFile method

    public server static boolean decompressWriteFile(container _con, str _newFileName, boolean _last = false, boolean _first = false)
    {
        FileIOPermission fileIOPermission;
        BinData          outp = new BinData();

        fileIOPermission = new FileIOPermission(_newFileName,'r');
        fileIOPermission.assert();

        if (_first && WinAPIServer::fileExists(_newFileName))
        {
            throw error(strFmt("@SYS18625",_newFileName));
        }

        CodeAccessPermission::revertAssert();

        outp.setData(_con);
        outp.appendToFile(_newFileName);

        if (_last)
        {
            fileIOPermission = new FileIOPermission(_newFileName,'rw');
            fileIOPermission.assert();

            outp.loadFile(_newFileName);
            outp.decompressLZ77();
            outp.saveFile(_newFileName);

            CodeAccessPermission::revertAssert();
        }

        return true;
    }

However, if we execute the job interactively on the Client, we'll not see the error mentioned above. This is because the similar method for Client-side execution has the following code

DMFStagingWriter.decompressWriteFileClient method

    public client static boolean decompressWriteFileClient(container con, str newFileName, boolean last = false, boolean first = false)
    {
        FileIOPermission fileIOPermission;
        BinData          outp = new BinData();

        if (first && WinAPI::fileExists(newFileName))
        {
            WinAPI::deleteFile(newFileName);
        }

        outp.setData(con);
        outp.appendToFile(newFileName);

        if (last)
        {
            fileIOPermission = new FileIOPermission(newFileName,'rw');
            fileIOPermission.assert();

            outp.loadFile(newFileName);
            outp.decompressLZ77();
            outp.saveFile(newFileName);

            CodeAccessPermission::revertAssert();
        }

        return true;
    }

Thus, to proceed further, I modified the DMFStagingWriter.decompressWriteFile method as shown below

DMFStagingWriter.decompressWriteFile method

    public server static boolean decompressWriteFile(container _con, str _newFileName, boolean _last = false, boolean _first = false)
    {
        FileIOPermission fileIOPermission;
        BinData          outp = new BinData();

        fileIOPermission = new FileIOPermission(_newFileName,'r');
        fileIOPermission.assert();

        if (_first && WinAPIServer::fileExists(_newFileName))
        {
            // Changed: delete the existing file (as the Client-side method does) instead of throwing
            //throw error(strFmt("@SYS18625",_newFileName));
            WinAPIServer::deleteFile(_newFileName);
        }

        CodeAccessPermission::revertAssert();

        outp.setData(_con);
        outp.appendToFile(_newFileName);

        if (_last)
        {
            fileIOPermission = new FileIOPermission(_newFileName,'rw');
            fileIOPermission.assert();

            outp.loadFile(_newFileName);
            outp.decompressLZ77();
            outp.saveFile(_newFileName);

            CodeAccessPermission::revertAssert();
        }

        return true;
    }
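If you would rather keep the "delete if it exists" logic in one place, it can be factored into a small server-side helper. The sketch below is not part of DIXF: the method name is hypothetical and it would live in a class of your choice. It asserts read/write permission before the delete, on the assumption that deleting a file needs write access in your environment.

```xpp
// Hypothetical helper, not part of DIXF
public server static void alexDeleteIfExists(str _fileName)
{
    FileIOPermission fileIOPermission;

    // Assumption: read is needed for the existence check, write for the delete
    fileIOPermission = new FileIOPermission(_fileName, 'rw');
    fileIOPermission.assert();

    if (WinAPIServer::fileExists(_fileName))
    {
        WinAPIServer::deleteFile(_fileName);
    }

    CodeAccessPermission::revertAssert();
}
```

Because the file is overwritten on every run, an external system that reads it while the export is in progress may see a partial file, so it is worth agreeing on the schedule with the consumer.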

Now when we execute the integration (data export) multiple times, a new file with the same name is generated each time, as expected

File

The next thing we'll notice after multiple executions of the Staging job is the error "Cannot create a record in Table. The record already exists"

Error

This happens because when the system executes the Staging job multiple times in Batch for the same ExecutionId, a Primary key violation occurs when records are inserted. Here's what the entity table Primary key looks like

Unique index (PK)
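To confirm that leftover staging rows are what triggers the duplicate key error, you can count the rows per Processing group and ExecutionId. This is a minimal sketch with a hypothetical job name. It assumes the AlexEntity staging table carries the DefinitionGroup and ExecutionId fields, as the DIXF cleanup code expects of an entity table.

```xpp
static void AlexCountStagingRows(Args _args)
{
    AlexEntity staging;

    // With count(RecId), the number of rows per group is returned in RecId
    while select count(RecId) from staging
        group by DefinitionGroup, ExecutionId
    {
        info(strFmt("%1 | %2 | %3 row(s)",
            staging.DefinitionGroup,
            staging.ExecutionId,
            staging.RecId));
    }
}
```

If rows for the ExecutionId used by the batch task are still present before the next Staging run, the insert will collide with them.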

To resolve this issue I'll execute the Staging cleanup job, available as a part of Data Import Export Framework (DIXF), before or after each iteration of the integration (data export)

Staging cleanup job

Staging cleanup job – Batch processing

Please note that at this point the system says that there are no records in staging to be cleaned up

Infolog

This is because you can only clean up fully open, fully closed or error jobs, as per the DMFStagingCleanup.deleteStagingData method. However, our job is by definition a semi-finished job: we are only interested in populating Staging data (AX -> Staging), and after that we use the Export to file function to generate a file. In other words, for our job Staging status = "Finished" and Target status = "Not run", which does not satisfy the rules defined in the DMFStagingCleanup.deleteStagingData method for cleanup activities.

DMFStagingCleanup.deleteStagingData method

    public void deleteStagingData()
    {
        boolean stagingDeleted;

        if (!entityName)
        {
            stagingDeleted = this.deleteBasedOnDefGroupOrExecId(defGroupName, executionId);
        }
        else
        {
            while select Entity, StagingStatus, TargetStatus, DefinitionGroup, ExecutionId from dmfDefinitionGroupExecution
                where dmfDefinitionGroupExecution.Entity == entityName &&
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Finished &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Finished)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Finished &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Hold)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Error &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Error)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::NotRun &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::NotRun)
            {
                if (defGroupName && dmfDefinitionGroupExecution.DefinitionGroup != defGroupName)
                    continue;

                if (executionId && dmfDefinitionGroupExecution.ExecutionId != executionId)
                    continue;

                dmfEntity = DMFEntity::find(entityName);
                dictTable = SysDictTable::newName(dmfEntity.EntityTable);

                if (dmfEntity)
                {
                    common = dictTable.makeRecord();

                    delete_from common
                        where common.(fieldName2id(dictTable.id(), fieldStr(DMFExecution, ExecutionId))) == dmfDefinitionGroupExecution.ExecutionId
                           && common.(fieldName2id(dictTable.id(), fieldStr(DMFDefinitionGroupEntity, DefinitionGroup))) == dmfDefinitionGroupExecution.DefinitionGroup;
                }

                delete_from dmfExecution
                    where dmfExecution.ExecutionId == dmfDefinitionGroupExecution.ExecutionId;

                stagingDeleted = true;
            }
        }

        if (stagingDeleted)
        {
            info("@DMF381");
        }
        else
        {
            info("@DMF712");
        }
    }
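To see why a given run does not qualify for cleanup, you can list the Staging and Target statuses that the method above evaluates. The job below is a sketch only: the job name and the entity name are hypothetical, and it reads the same DMFDefinitionGroupExecution fields as the select statement above.

```xpp
static void AlexShowExecutionStatuses(Args _args)
{
    DMFDefinitionGroupExecution execution;
    // Hypothetical entity name: use the name of your own Target entity
    str                         entityName = "AlexEntity";

    while select Entity, StagingStatus, TargetStatus, DefinitionGroup, ExecutionId
        from execution
        where execution.Entity == entityName
    {
        // strFmt prints the label of each DMFBatchJobStatus value
        info(strFmt("%1 | %2 | staging: %3 | target: %4",
            execution.DefinitionGroup,
            execution.ExecutionId,
            execution.StagingStatus,
            execution.TargetStatus));
    }
}
```

A row showing staging "Finished" and target "Not run" is the semi-finished combination that none of the four cleanup rules accepts.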

To successfully clean up Staging data while keeping it simple, I'll modify the DMFStagingCleanup.deleteStagingData method by commenting out the cleanup qualification rules based on statuses

DMFStagingCleanup.deleteStagingData method

    public void deleteStagingData()
    {
        boolean stagingDeleted;

        if (!entityName)
        {
            stagingDeleted = this.deleteBasedOnDefGroupOrExecId(defGroupName, executionId);
        }
        else
        {
            while select Entity, StagingStatus, TargetStatus, DefinitionGroup, ExecutionId from dmfDefinitionGroupExecution
                where dmfDefinitionGroupExecution.Entity == entityName /* &&
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Finished &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Finished)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Finished &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Hold)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Error &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Error)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::NotRun &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::NotRun) */
            {
                if (defGroupName && dmfDefinitionGroupExecution.DefinitionGroup != defGroupName)
                    continue;

                if (executionId && dmfDefinitionGroupExecution.ExecutionId != executionId)
                    continue;

                dmfEntity = DMFEntity::find(entityName);
                dictTable = SysDictTable::newName(dmfEntity.EntityTable);

                if (dmfEntity)
                {
                    common = dictTable.makeRecord();

                    delete_from common
                        where common.(fieldName2id(dictTable.id(), fieldStr(DMFExecution, ExecutionId))) == dmfDefinitionGroupExecution.ExecutionId
                           && common.(fieldName2id(dictTable.id(), fieldStr(DMFDefinitionGroupEntity, DefinitionGroup))) == dmfDefinitionGroupExecution.DefinitionGroup;
                }

                delete_from dmfExecution
                    where dmfExecution.ExecutionId == dmfDefinitionGroupExecution.ExecutionId;

                stagingDeleted = true;
            }
        }

        if (stagingDeleted)
        {
            info("@DMF381");
        }
        else
        {
            info("@DMF712");
        }
    }

As a result I'll be able to clean up Staging data even for a semi-finished job, which is exactly the case in my scenario

Infolog

As the next logical step I'll include the Staging cleanup job as another Batch task in the consolidated Batch job for integration (data export)

Batch tasks

Staging cleanup job - Parameters

Please note that I want the Staging job to run only when the Staging cleanup job has finished. This way I can be sure that we avoid Primary Key duplication exceptions for the Entity table when records are inserted

Staging job - Condition
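The same task chain can also be created from code with the standard BatchHeader class instead of the Batch job form. The method below is a hedged sketch, not the setup used in this article: the method name is hypothetical, the three tasks are assumed to be passed in already configured with their parameters, and making the Export task wait for the Staging task is my assumption about the intended order.

```xpp
// Hypothetical helper: the three tasks are passed in already configured
public static void alexCreateExportBatchJob(
    RunBaseBatch _cleanupTask,
    RunBaseBatch _stagingTask,
    RunBaseBatch _exportTask)
{
    BatchHeader batchHeader = BatchHeader::construct();

    batchHeader.parmCaption("DIXF export (cleanup, staging, export)");

    batchHeader.addTask(_cleanupTask);
    batchHeader.addTask(_stagingTask);
    batchHeader.addTask(_exportTask);

    // Staging runs only after cleanup has finished
    batchHeader.addDependency(_stagingTask, _cleanupTask, BatchDependencyStatus::Finished);
    // Assumption: export runs only after staging has finished
    batchHeader.addDependency(_exportTask, _stagingTask, BatchDependencyStatus::Finished);

    batchHeader.save();
}
```

The sketch does not set a recurrence, so the schedule would still be defined on the resulting Batch job.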

At this point if I kick off the consolidated integration Batch job, I'll see another exception popping up

Error

This time it is caused by the fact that when we cleaned up Staging data we also deleted the DMFExecution record for the specific ExecutionId, which is used by subsequent integration executions. DMFExecution records get deleted automatically based on the Delete Action on the DMFDefinitionGroupExecution table

DMFDefinitionGroupExecution table – Delete action

And then when we execute the Staging cleanup job over and over again, the system looks for a DMFExecution record with the specific ExecutionId, which no longer exists, and as a result the system throws the exception as per the DMFStagingCleanup.validate method

DMFStagingCleanup.validate method

    public boolean validate(Object calledFrom = null)
    {
        boolean            ret = true;
        DMFDefinitionGroup dmfDefinitionGroup;

        if (!entityName && !executionId && !defGroupName)
        {
            throw error("@DMF1498");
        }

        if (entityName)
        {
            select firstOnly1 RecId from dmfEntity
                where dmfEntity.EntityName == entityName;

            if (!dmfEntity.RecId)
            {
                throw error(strFmt("@DMF370",entityName));
            }
        }

        if (executionId)
        {
            select firstOnly1 RecId from dmfExecution
                where dmfExecution.ExecutionId == executionId;

            if (!dmfExecution.RecId)
            {
                throw error(strFmt("@DMF785",executionId));
            }
        }

        if (defGroupName)
        {
            select firstOnly1 RecId from dmfDefinitionGroup
                where dmfDefinitionGroup.DefinationGroupName == defGroupName;

            if (!dmfDefinitionGroup.RecId)
            {
                throw error(strFmt("@DMF786",defGroupName));
            }
        }

        return ret;
    }
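Before re-running the batch job, you can check whether the DMFExecution record for your ExecutionId still exists, using the same lookup that validate performs. This is a minimal sketch; the job name and the ExecutionId value are hypothetical.

```xpp
static void AlexCheckExecutionHeader(Args _args)
{
    DMFExecution dmfExecution;
    // Hypothetical value: use the ExecutionId configured on your batch tasks
    str          executionId = "AlexExport-1";

    select firstOnly RecId from dmfExecution
        where dmfExecution.ExecutionId == executionId;

    if (dmfExecution.RecId)
    {
        info(strFmt("DMFExecution record found for %1", executionId));
    }
    else
    {
        warning(strFmt("No DMFExecution record for %1", executionId));
    }
}
```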

To prevent this error, our goal is to prevent the deletion of the DMFExecution record as a part of the Staging cleanup job. Thus the DMFExecution "header" record will stay in place even when the actual staging data related to this ExecutionId is deleted/cleaned up. To do so I'll modify the DMFStagingCleanup.deleteStagingData method one more time as shown below

DMFStagingCleanup.deleteStagingData method

    public void deleteStagingData()
    {
        boolean stagingDeleted;

        if (!entityName)
        {
            stagingDeleted = this.deleteBasedOnDefGroupOrExecId(defGroupName, executionId);
        }
        else
        {
            while select Entity, StagingStatus, TargetStatus, DefinitionGroup, ExecutionId from dmfDefinitionGroupExecution
                where dmfDefinitionGroupExecution.Entity == entityName /* &&
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Finished &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Finished)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Finished &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Hold)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::Error &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::Error)
                    ||
                    (dmfDefinitionGroupExecution.StagingStatus == DMFBatchJobStatus::NotRun &&
                     dmfDefinitionGroupExecution.TargetStatus == DMFBatchJobStatus::NotRun) */
            {
                if (defGroupName && dmfDefinitionGroupExecution.DefinitionGroup != defGroupName)
                    continue;

                if (executionId && dmfDefinitionGroupExecution.ExecutionId != executionId)
                    continue;

                dmfEntity = DMFEntity::find(entityName);
                dictTable = SysDictTable::newName(dmfEntity.EntityTable);

                if (dmfEntity)
                {
                    common = dictTable.makeRecord();

                    delete_from common
                        where common.(fieldName2id(dictTable.id(), fieldStr(DMFExecution, ExecutionId))) == dmfDefinitionGroupExecution.ExecutionId
                           && common.(fieldName2id(dictTable.id(), fieldStr(DMFDefinitionGroupEntity, DefinitionGroup))) == dmfDefinitionGroupExecution.DefinitionGroup;
                }

                // Changed: keep the DMFExecution "header" record so later runs can reuse the ExecutionId
                /*
                delete_from dmfExecution
                    where dmfExecution.ExecutionId == dmfDefinitionGroupExecution.ExecutionId;
                */

                stagingDeleted = true;
            }
        }

        if (stagingDeleted)
        {
            info("@DMF381");
        }
        else
        {
            info("@DMF712");
        }
    }

Then we can kick off the consolidated integration Batch job again to observe the result. To make the experiment even more interesting, I also added some more demo data to the table in between Batch job executions. The result looks like this

Execution #1

Execution #2

Please note that after the second execution the data export file has more data than after the first execution. That's exactly what I wanted!

Now you can keep the consolidated integration Batch job running, and it will generate the data export file with up-to-date business data over and over again to ensure a continuous integration flow. At the same time external systems can consume the up-to-date exported business data whenever needed

After a number of executions we can review a Batch history

Batch history

And drill down to execution details if needed

Execution details

For example, this is what the Staging job execution details look like for Execution #1 and Execution #2 mentioned above

Execution details – Comparison (Execution #1 vs Execution #2)

Execution #1

Execution #2

Remark: In this article I leveraged Data Import Export Framework (DIXF) to organize data export. Depending on your requirements you can certainly develop a custom X++ script for data export. However, please note that in this case you will have to implement file generation logic, data logging, exception handling/review, performance-related features (if critical), advanced security (if needed) and other functionality manually, whereas with Data Import Export Framework (DIXF) all of the above comes with the framework. It is also important to mention that Data Import Export Framework (DIXF) ships with numerous standard templates for various business entities, so you can simply take advantage of those templates instead of developing/re-inventing your own to fully realize the benefits of the framework. Please also review other articles in this series, which highlight different approaches to the same task and their details

Summary: This document describes how to implement File Exchange data export integration with Microsoft Dynamics AX 2012 using Data Import Export Framework (DIXF). In particular I illustrated the experience you would have when using custom DIXF templates and drew your attention to the benefits of using standard DIXF templates. I also focused on several important aspects of continuous integration (data export) such as process automation using batch jobs, generation of files with predefined names, etc.

Tags: Dynamics ERP, Microsoft Dynamics AX 2012, Integration, File Exchange, Data Export, DMF, Data Migration Framework (former name), DIXF, Data Import Export Framework (current name), CSV.

Note: This document is intended for information purposes only, presented as it is with no warranties from the author. This document may be updated with more content to better outline the concepts and describe the examples.

Call to action: If you liked this article, please take a moment to share your experiences and the interesting scenarios you came across in the comments. This info will definitely help me pick the right topics to highlight in my future blogs

Hi Alex,

Thanks for a very detailed article on using DIXF for file integration. I'm running into a problem when I follow the article step-by-step.

I just created the first batch job to get staging data and it ran with the System.NullReferenceException. However, when I checked my staging table, it already had a relation with the DMFExecution table on the ExecutionId column.

So, I'm not sure why the batch is failing. Can you help?

Thanks.

Never mind Alex, I found the problem. The problem was that when I ran the wizard to create the custom entity, it asked for a display menu item. I'd created a menu item, but hadn't specified a value for the object property as I wasn't sure which form it was for.

So, I created a form for the staging table, put its name in the menu item's object property, compiled the projects, generated incremental CIL, re-created the batch job and it ran without a hitch.

Okay, ran into another issue. I added canGoBatchJournal method to DMFStagingWriter and DMFStagingToSourceFileWriter classes, compiled them, did an incremental CIL. The classes still don't show when I try to setup tasks for a manually created batch job.

What am I missing?

Thanks.

Never mind Alex, I found a solution to the problem. I'd to get out of AX and come back in. That fixed the problem.

Alex, I'm stumped again.

I'm in the very last step where I create an integration batch job with three tasks.

When I setup the "Export to file" task in the batch job and setup its parameters, the task picks up the name for an earlier execution-Id (I gather this from the name of the file it prompts me to save the file as). Sure enough, when I run the job, it has no data in the output file because I deleted the rows from the earlier execution-id. How can I force the task to point to the execution-id I'm interested in?

Thank you.

Never mind Alex, I figured it out. I've to run the step in interactive mode for it to become available in the batch task setup.

Hi Alex

Really its nice post,I need to do the same thing for the data import can u help to solve the problem Thanks in advance

Hi Alex, I had followed all the steps as illustrated by you. But the issue i am facing is that, output file generated every time from Export batch job, is considering the previous data as well, which i don't need it. Is there any way to flush out previous exported data.

Hi Alex, by running Cleaning Stage job solved above problem. But now I am getting stuck on new issue that is Output file is getting generated only with one record. Am I missing any relation to add in entity tables. I have added ExecutionId relation as listed in your process.

Hi Alex, created batchjob with three tasks. on task "DMFStagingToSourceFileWriter" not getting processing group lookup value in parameters. can you please help, did i miss anything.